OpenAI has signed a $200 million contract with the Pentagon to develop artificial intelligence (AI) tools for military use. The one-year contract, announced in June 2025, will focus on creating “prototype frontier AI capabilities” for both warfighting and enterprise applications.

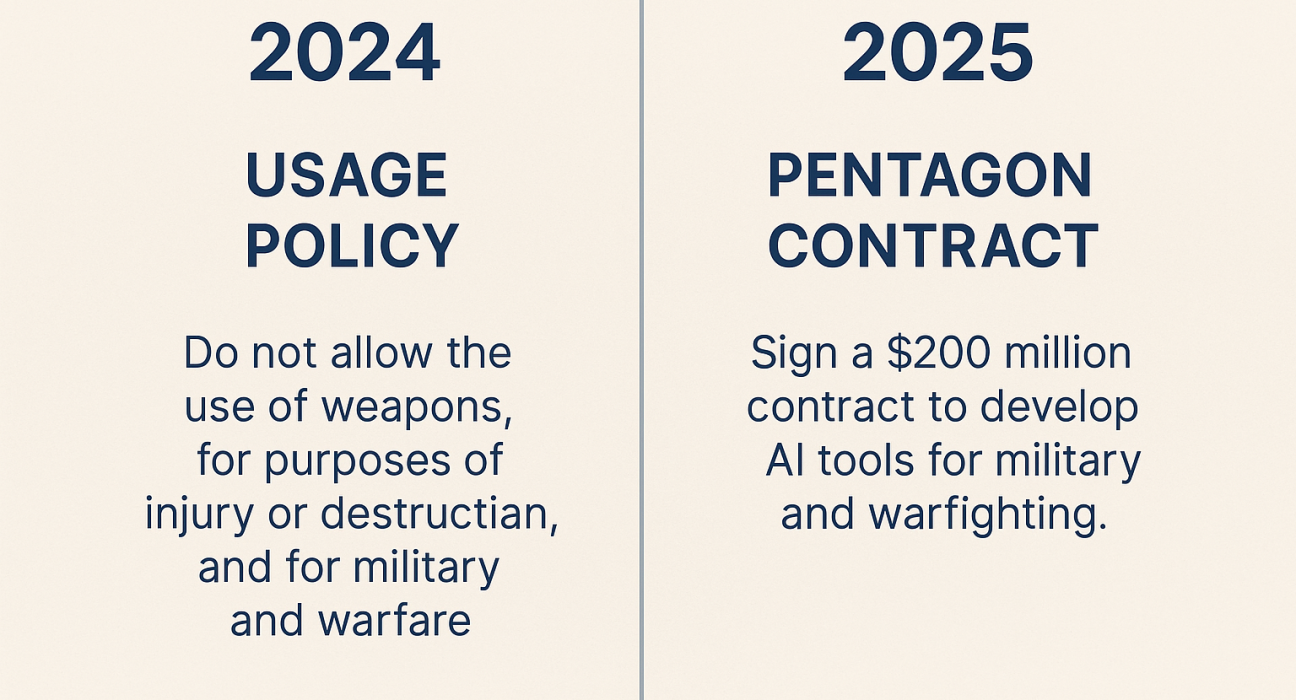

The deal marks a major policy shift for OpenAI, which previously prohibited its technology from being used in weapons development or warfare. In early 2024, its usage policies clearly banned military applications. But by late 2024, the company had revised those policies to allow national security projects, with the caveat that the technology not be used to “harm yourself or others.” CEO Sam Altman later defended the pivot, stating that OpenAI is proud to engage in national security.

The article frames this as a case of “ethical whiplash” — a company that branded itself as advancing human flourishing now building AI for military operations.

Privatization of military decision-making

The report argues that this is not just outsourcing software but outsourcing judgment itself. Unlike traditional Pentagon contracts for jets or logistics, AI systems are designed to analyze, recommend, and potentially decide. Critics warn this represents a structural change: military cognition and decision-making delegated to private corporations.

The Pentagon already spends nearly half its budget on contractors ($415 billion in 2022). But AI differs because it operates as an evolving black box, trained on private data and methods shielded from public oversight.

Broader context

- Other tech companies are joining the defense AI boom:

  - Anduril is partnering with OpenAI on national security missions.

  - Anthropic is working with Palantir and Amazon.

  - Scale AI has contracts for the Pentagon’s “Thunderforge” program.

- This trend is fueled by Trump’s proposed $1 trillion defense budget, the largest in U.S. history.

Accountability concerns

The article stresses the lack of democratic oversight. Congress approves budgets, but actual applications of AI systems are determined by private companies. Once the systems are deployed, neither the public nor the government has visibility into how the models work, what data they are trained on, or how to hold them accountable if they malfunction or cause unintended consequences.

Ethics experts, such as Margaret Mitchell of Hugging Face, warn that once technology is shared with defense, control over its future use is effectively lost.

Main Points

- OpenAI signed a $200M Pentagon contract for military AI prototypes.

- The deal reverses earlier bans on military use in OpenAI’s policies.

- Raises concerns over privatization of military judgment and decision-making.

- Fits into a broader Silicon Valley shift toward defense contracting amid record Pentagon budgets.

- Critics highlight a democracy deficit — limited oversight, transparency, or accountability for algorithmic warfare.

Projections

Potential Positive Outcomes (Pro):

- May strengthen U.S. military capabilities and efficiency in national security.

- Could accelerate innovation, with military research spin-offs benefiting civilian industries.

- Partnerships may push for higher safety and testing standards in advanced AI.

Potential Negative Outcomes (Con):

- Risks eroding democratic oversight by shifting critical decisions into private hands.

- Potential misuse or malfunction of AI systems could have grave consequences (civilian casualties, misjudged threats).

- Ethical credibility of AI companies like OpenAI may decline, fueling distrust.

- Sets precedent for normalizing AI militarization, blurring lines between civilian tech and military infrastructure.

Sources

- Winsome Marketing, “War, Inc.: OpenAI’s $200M Pentagon Payday” (winsomemarketing.com)

- OpenAI usage policy revisions, 2024–2025 (winsomemarketing.com)

- Pentagon contracting data, defense budget proposals (winsomemarketing.com)

- Expert commentary: Margaret Mitchell, Hugging Face (winsomemarketing.com)